GPUs are the new currency of the AI gold rush. But for many startups and independent researchers, high-performance compute (such as the much-sought-after NVIDIA A100 or H100) often comes with eye-watering costs, inflexible multi-year contracts and endless back-and-forth with sales reps.

Thunder Compute wants to change that story. The startup just announced a $4.5 million seed round led by Matrix Partners, with heavy hitters like Y Combinator and Preston-Werner Ventures also participating.

But this is not just another funding story; it signals a shift in how we think about AI infrastructure.

Why Thunder Compute is Different (It’s the Software, Stupid!)

Most cloud providers treat GPUs as a hardware commodity. Thunder Compute treats them as a software product. The platform was built by infrastructure engineers who build on these systems themselves, and it focuses on removing the "friction" from AI development.

1. Best Price in the Industry

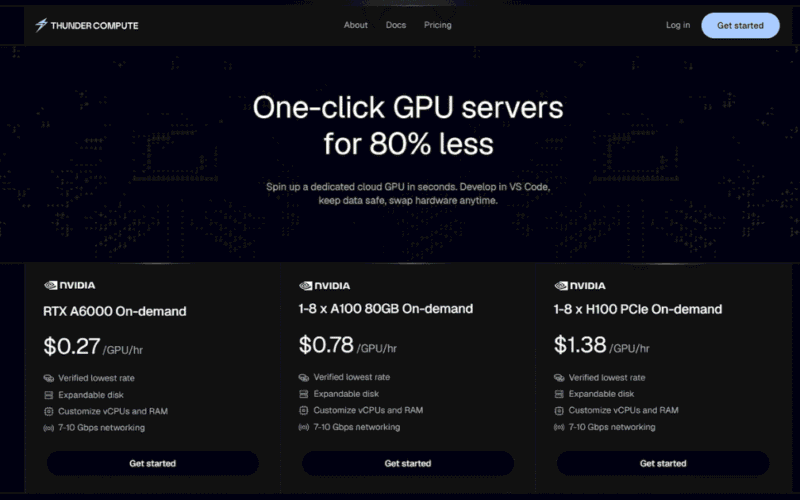

Now for the numbers. Thunder Compute claims to offer the cheapest on-demand A100 and H100 instances on the market. At a time when "GPU burn" can kill a startup before it even ships its MVP, that kind of price-to-performance ratio is a major competitive advantage.

2. Designed for the Developer’s Workflow

Instead of handing you a raw terminal and wishing you luck, Thunder Compute integrates directly with the tools you already use:

VS Code & Cursor Integration: Write and debug code on an H100 as if it were your local machine.

Ready-to-Code Environments: Hours spent battling CUDA drivers are a thing of the past. Instances come pre-installed with PyTorch and optimised environments.

Scale in Seconds: Need more power? With snapshots, you can spin up a larger instance and scale to as many as 8 GPUs and 720 GB of RAM.

3. No Sales Reps, No Bloat

The "Big Cloud" providers (you know who they are) are bloated. They want to lock you into long-term contracts and complex enterprise tiers. Thunder Compute's bet is simple: compute should be frictionless. You should be able to create an account and have a live GPU terminal running in minutes, not days. The company wants to focus on "software ergonomics" rather than reselling hardware, and to cut "time-to-train" so researchers can concentrate on their models instead of their servers.

The Verdict: What’s Next for AI Infrastructure

The race for better AI models will be won not simply by whoever has the most GPUs, but by whoever can deploy them fastest. Armed with $4.5 million in new capital and a developer-first mindset, Thunder Compute is positioning itself as the go-to platform for teams that want to move fast and stay lean.

Ready to fire up your first H100?

Check out Thunder Compute’s GPU instances